This post focuses on observability, but this time from the business domain perspective: metrics and business KPIs. We will use custom AWS CloudWatch metrics.

What makes an AWS Lambda handler resilient, traceable, and easy to maintain? How do you write such code?

In this blog series, I’ll attempt to answer these questions by sharing my knowledge and AWS Lambda best practices, so you won’t make the mistakes I once did.

- This blog series progressively introduces best practices and utilities by adding one utility at a time.

- Part 1 focuses on Logging.

- Part 2 focuses on Observability: monitoring and tracing.

- Part 4 focuses on Environment Variables.

- Part 5 focuses on Input Validation.

- Part 6 focuses on Configuration and Feature Flags.

- Part 7 focuses on how to start your own Serverless service in two clicks.

- Part 8 focuses on AWS CDK Best Practices.

I’ll provide a working, open-source AWS Lambda handler template Python project.

This handler embodies Serverless best practices and has all the bells and whistles for a proper production-ready handler.

During this blog series, I’ll cover logging, observability, input validation, feature flags, dynamic configuration, and how to use environment variables safely.

While the code examples are written in Python, the principles are valid for all programming languages supported by AWS Lambda functions.

You can find all examples at this GitHub repository, including CDK deployment code.

Why Should You Care About Metrics and KPIs?

“Organizations can combine business context with full stack application analytics and performance to understand real-time business impact, improve conversion optimization, ensure that software releases meet expected business goals, and confirm that the organization is adhering to internal and external SLAs.” — as described in the Dynatrace observability blog post.

Business metrics, as stated above, can drive your business forward towards success.

Metrics consist of a key and a value. A value can be a number, percentage, rate, or any other unit. Typical metrics are the number of sessions, number of users, error rate, and number of views.

KPIs, key performance indicators, are "special" metrics that, in theory, can predict the success of your service. KPIs are strategically designed to support the business use case. They require a deep understanding of your business and users and, as such, require careful definition. KPIs serve as a means to predict the future of the business.

Fail to meet well-defined KPIs, and your service is likely to fail. Succeed in meeting them, and your service has a better chance of succeeding.

Different services define different metrics as KPIs. A blog might define its KPIs as returning visitors, subscriber count, and bounce rate. These metrics tell whether the blog is gaining traction and generating consistent subscriber leads.

Non-KPI metrics are beneficial too. These are metrics that describe the past and present. For example, usage metrics can make you realize you have spent a lot of time on a feature that most users don't care about. They can help you shift your focus to what matters, and that, in turn, improves the KPIs and your service's chances of success.

Now that we have a clear understanding of why we require business domain metrics, let's discuss the how.

Implementation

As with other AWS built-in metrics, we want to monitor them in AWS CloudWatch.

We want to be able to create alarms and display the values in dashboards.

Let's assume that our example handler, 'my_handler', generates the KPI 'valid events count'. It increments the metric by 1 for each event that passes input validation. Since the current handler example has no input validation yet (it will be added in part 5 of the series), all input events are considered valid and counted by this metric.

Custom metrics behave just like the built-in metrics that AWS services publish to CloudWatch.

All that's left is to add the code that sends the metric and to define the proper role permissions. The required permission is 'cloudwatch:PutMetricData', which must be added to your AWS Lambda function role.
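As an illustration of the permission mentioned above, here is a minimal sketch of an IAM policy document built as a plain Python dictionary. The variable names are hypothetical, and the `Condition` block is an optional addition of mine that limits publishing to a single namespace. `PutMetricData` does not support resource-level permissions, so the resource is `*`.

```python
import json

# Assumption: the namespace used later in this post
METRICS_NAMESPACE = "my_product_kpi"

put_metric_policy = {
    "Version": "2012-10-17",
    "Statement": [
        {
            "Effect": "Allow",
            "Action": "cloudwatch:PutMetricData",
            # PutMetricData has no resource-level permissions, hence "*"
            "Resource": "*",
            # Optional hardening: only allow publishing into our own namespace
            "Condition": {
                "StringEquals": {"cloudwatch:namespace": METRICS_NAMESPACE}
            },
        }
    ],
}

# Print the JSON document that could be attached to the function's role
print(json.dumps(put_metric_policy, indent=2))
```

The same statement can be expressed in CDK or any other infrastructure-as-code tool; the JSON shape stays the same.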

As for the code, AWS Lambda Powertools has a utility that does just that: the Metrics utility.

Before we dive into the code example, let's go over some AWS CloudWatch metrics terminology.

A namespace contains at least one dimension. A dimension consists of a key and a value and holds one or more metrics. Each metric has a key, a unit type, and a value.

There are several unit types: count, rate, percent, seconds, bytes, etc.

Namespace ---> [ Dimensions (key, value) ] ---> [ Metrics (key, value of unit type) ]

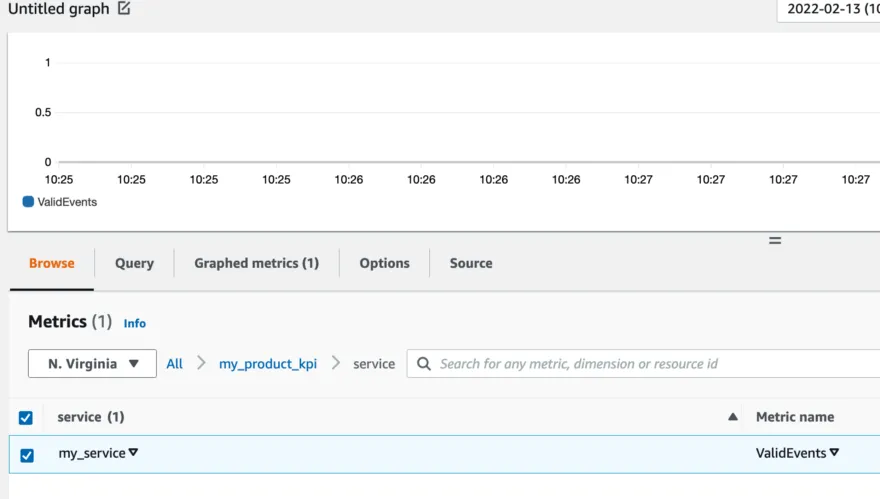

The picture below depicts the metrics hierarchy we wish to define:

The namespace 'my_product_kpi' holds a dimension with a key of 'service' and a value of 'my_service'. This dimension contains a metric with the key 'ValidEvents' and a numerical unit type.
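To make the hierarchy concrete, the sketch below builds a record in the CloudWatch Embedded Metric Format (EMF), the JSON layout that the Powertools Metrics utility writes to the function's log. The helper `build_emf_record` is a hypothetical name introduced for illustration only; it uses nothing but the standard library, and the `Count` unit is my assumption for the "numerical" unit type.

```python
import json
import time
from typing import Any, Dict, Tuple


def build_emf_record(
    namespace: str,
    dimensions: Dict[str, str],
    metrics: Dict[str, Tuple[str, float]],
) -> Dict[str, Any]:
    """Build one EMF record: namespace -> dimensions -> metrics (unit, value)."""
    record: Dict[str, Any] = {
        "_aws": {
            "Timestamp": int(time.time() * 1000),  # milliseconds since epoch
            "CloudWatchMetrics": [
                {
                    "Namespace": namespace,
                    # One dimension set, listing the dimension keys
                    "Dimensions": [list(dimensions.keys())],
                    "Metrics": [
                        {"Name": name, "Unit": unit}
                        for name, (unit, _value) in metrics.items()
                    ],
                }
            ],
        }
    }
    # Dimension values and metric values sit at the top level of the record
    record.update(dimensions)
    record.update({name: value for name, (_unit, value) in metrics.items()})
    return record


record = build_emf_record(
    namespace="my_product_kpi",
    dimensions={"service": "my_service"},
    metrics={"ValidEvents": ("Count", 1)},
)
print(json.dumps(record, indent=2))
```

The `_aws` block is metadata that tells CloudWatch which top-level keys are dimensions and which are metrics.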

Putting it all together

Before we dive headfirst into the code, I’d like to point out that a general rule of thumb is to add business KPI metrics later during your service development. Requirements and KPIs will be more apparent as you get closer to the finish line.

Let’s add the Metrics utility alongside the logger and tracer utilities already implemented in the previous posts.

You can find all code examples at the AWS Lambda Handler Cookbook GitHub repository.

In line 14, we create a global instance of the Metrics utility. The namespace is defined as ‘my_product_kpi’.

And finally, the complete version of ‘my_handler.py’:

In line 8, we import the global metrics utility.

In line 16, we add a metrics decorator, ‘log_metrics’. This decorator validates our generated metrics. Each metric has a unit that defines a type and a value, and they must match (a numerical unit type requires a numerical value, and so on). The decorator also serializes and sends the metrics to AWS CloudWatch at the end of every invocation.

In line 23, we add the metric ‘ValidEvents’ and a count of 1 because the handler got one valid event.

That’s it! The handler now has built-in logging, tracing, and metrics capabilities, and you can now monitor the business KPIs.
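Since the goal stated earlier was to create alarms on these metrics, here is a hedged sketch of the parameters such an alarm might use. The function `build_valid_events_alarm`, the alarm name, the threshold, and the period are all hypothetical choices of mine. The dictionary keys are the parameter names of CloudWatch's `PutMetricAlarm` API, and the sketch only builds the parameters, so it runs without AWS credentials.

```python
from typing import Any, Dict


def build_valid_events_alarm(
    threshold: float = 1.0, period_seconds: int = 300
) -> Dict[str, Any]:
    """Return keyword arguments for CloudWatch's PutMetricAlarm API."""
    return {
        "AlarmName": "my_service-low-valid-events",  # hypothetical name
        "Namespace": "my_product_kpi",
        "MetricName": "ValidEvents",
        "Dimensions": [{"Name": "service", "Value": "my_service"}],
        "Statistic": "Sum",
        "Period": period_seconds,
        "EvaluationPeriods": 1,
        "Threshold": threshold,
        # Alarm when fewer valid events than the threshold arrive in a period
        "ComparisonOperator": "LessThanThreshold",
        # Treat a period with no data points as a breach
        "TreatMissingData": "breaching",
    }


alarm_params = build_valid_events_alarm()
# With boto3 installed and AWS credentials configured, this could be applied as:
#   boto3.client("cloudwatch").put_metric_alarm(**alarm_params)
```

The namespace, metric name, and dimension must match exactly what the handler publishes; otherwise the alarm watches a metric that never receives data.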

Coming Next

This concludes the third part of the series. Join me for the next part, where I parse and validate AWS Lambda environment variables.

Special thanks go to:

- Ben Heymink

- Alexy Grabov

- Yaara Gradovitch